I spent some time in Sri Lanka over the summer and ran into someone whose company has begun using AI. (Everyone I met seemed to be in love with OpenAI.) Before I could get on my hobby horse about plagiarism and cheap hallucinations, he noted that his company was very clear about ‘fencing off’ the content it feeds the algorithm, so that it won’t run amok. That struck me as a sensible approach for this journey.

However, the chaotic AI arms race is on even here, with businesses greenlighting AI. One post talks up the “plethora of new job opportunities,” while several groups are popping up to get their arms around this. Dr. Sanjana Hattotuwa is one of the few urging everyone to slow down and consider how generative AI and large language models (LLMs) might feed problems such as ‘truth decay.’ He calls for a ‘regulatory sandbox’ approach.

PLAGIARISM? This is more than a copy/paste problem. Consider voice theft. You may have heard about Scarlett Johansson’s legal dispute with OpenAI over allegedly imitating her voice. (For a deep dive, read WIRED, which covers the legal implications well.) It’s an intellectual property violation: ‘cloning’ someone’s voice without permission. Sure, young people will always be infatuated with algorithms that can produce anything (essays, books, graphics, software, presentations, videos) in a few clicks. But if we don’t address this, I told my friend, the problems will go deeper than plagiarism.

Young people will begin to believe that they can’t be as ‘creative’ as the tool and, over time, give up on ideation. We would be turning them into slaves to the tool, encouraging them to outsource everything. First, because they can. Second, because they will no longer be able to do what they were once capable of doing. Remember when we knew all our friends’ and cousins’ phone numbers by heart? What made our brains do that?

HILARIOUS THREE-LEGGED PROBLEM. Before we closed for summer, my students were experimenting with AI image generation in Bing, now an AI-powered search engine. They also discovered that Pixlr, the Photoshop-like tool we use in class, had a similar option. But here’s what they ran into:

Take a look at these images used by some students for an eBook assignment I gave them. (I’ve written about how each semester my 7th graders come up with about 125 books.)

Exhibit A:

Spot anything odd about this cover? It’s not just the plastic-like muscles.

I call this the three-legged football player problem. The OpenAI tool in Bing goes off the rails at times. But instead of being annoyed with the outcome, I savored the moment: it was a wonderful opportunity to teach visual literacy.

Many things about this picture are wrong. Yes, the muscles look plastic, or at least over-Photoshopped. The gold marker is out of place. But the legs? There’s a third leg popping out of his shoulder!

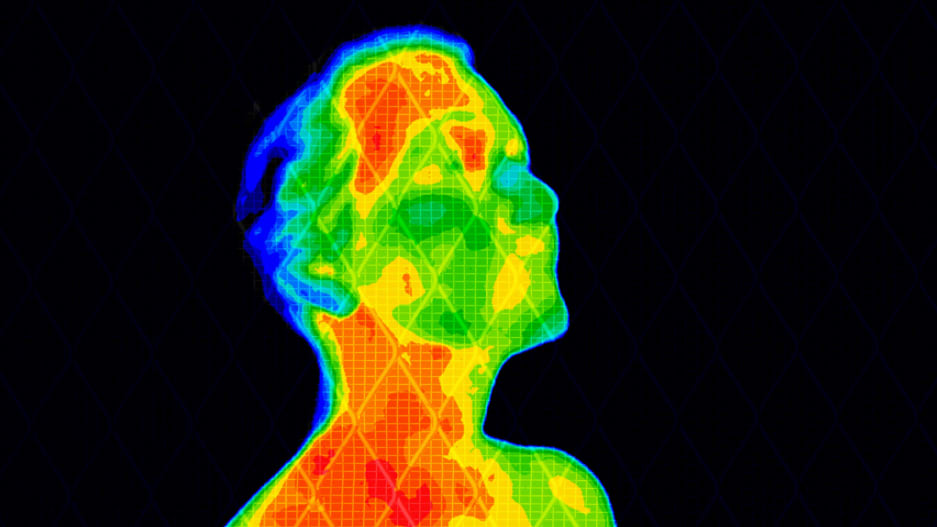

Exhibit B:

This student’s eBook was about an off-duty soldier in a war set in the future. Notice anything weird here?

Yeah, the gloves. Looks like they came from Home Depot! Anything else? Check the flag on his shoulder.

On May 14, I presented on a similar topic at a TED Talk-style event I had put together at my school. (We called it BEN Talks, after Benjamin Franklin High School.) My point was that the elephant in the room today is not even an elephant. It’s a parrot: the ‘stochastic parrot’ that researcher Emily Bender and others warned us about. It’s luring us down a dangerous path and poses a huge threat.

I remember a time when we were fed the hype of how the ‘Internet of Things’ (IOT) would rescue us. (I have to admit, I swallowed that as well.) According to this glowing theory, a malfunctioning part on an aircraft making a long-distance flight would ‘communicate’ with its destination, so that technicians would be ready to replace it when the plane landed. (The pilot would not even know; the ‘things’ would talk to each other.) IOT is here, but fortunately it’s not for every-thing. Your fairy lights can talk to your Bluetooth speaker, for all I care. But spare me the Apple Watch that tells my fridge what I need to cook for dinner because of some health condition it tracks through my skin.

If IOT was supposed to make our lives safer and more convenient, how come a door plug on a Boeing jet could come loose and drop out of the sky without any warning? Why didn’t the loose nuts text the wizards at Boeing to tell them so?

We were sold on some misleading, overhyped ‘intelligence,’ and no one dared question it. If you did, you were a fringe Luddite who needed to be voted off the island. I’m sorry, but I got to this island because there was a pilot and a co-pilot on board, not some aviation algorithm.

If you’re a student, you’ll love this.

On a related note, I support Write The World, a website that encourages young people to share their creative writing. Here’s one submission by a high school freshman.

Powerful poetry about AI’s ‘knowledge.’

So what do we do, besides writing poetry and articles bemoaning the awkward, overhyped path we are being led down? I think we should join the resistance to three-legged athletes, put on proper gloves, and take on the tech bros feeding us this pipe dream. There are more urgent humanitarian needs that technology could address.

In a related development, researchers at ASU are looking at how robots could augment, rather than replace, workers in certain jobs. This story,

Alexa is supposed to be in ‘listening mode’ only when the device is addressed. Last week, however, Amazon confirmed that some of its

And then there’s the not-so-funny side to having an app for everything. Just take a look at the recent lawsuits and missteps by tech companies.